Terminus.

An AI research assistant that only gives answers it can prove, built from a real client need and shipped at a hackathon.

Believe

AI has a trust problem

AI tools like ChatGPT are incredible. But they have one big flaw: sometimes they make things up. They give you a confident answer that sounds completely right, but is completely wrong. In AI, this is called a "hallucination."

For casual questions, that's annoying. For researchers writing papers, doctors reviewing studies, or engineers checking specifications, it's dangerous. A fake source or a made-up statistic doesn't just waste your time. It can ruin your credibility.

The question of how to fix that didn't come out of thin air. It came from a real person with a real need.

Understand

Meeting the client who needed this

Through the Rockwell Entrepreneurship Fellowship at RMU, I was paired with Kris Rockwell as a real-world client. The fellowship connects students with industry professionals to work on real problems — not classroom exercises.

I met with Kris twice. During those conversations, he explained what he needed: an AI system that could be trusted in environments where accuracy isn't optional — places like healthcare, engineering, and academic research.

He laid out five problems he kept running into:

Privacy and security

Sensitive research data can't be sent to cloud AI services. The tool needs to run locally, on your own machine.

Hallucinations

Most AI tools make claims they can't back up. In research, every statement needs a real source behind it.

Domain depth

General-purpose AI doesn't go deep enough for specialized research. It gives surface-level answers when you need expert-level detail.

Cost

API-based AI tools are expensive to run at scale. Small research teams and university labs can't afford enterprise pricing.

Offline access

Research teams need tools that work without internet. Field researchers, secure labs, and remote teams can't rely on cloud connections.

Ideate

Building an AI that shows its work

The idea behind Terminus is simple: instead of generating answers from memory (which is where hallucinations come from), make the AI read real research papers first, then answer based only on what it found.

Here's how it works: the approach is called RAG (Retrieval-Augmented Generation). In plain English, the AI reads the actual papers before it answers you, and shows you exactly where its answer came from. No guessing. No making things up. Every answer has a receipt.

The key difference from regular AI: if Terminus can't find a source for something, it tells you it doesn't know instead of making something up. That honesty is the whole point.
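The "say you don't know" rule can be sketched in a few lines. This is a minimal illustration, not the Terminus code: the `ScoredPassage` type, the `answer_or_abstain` function, and the `MIN_SCORE` cutoff are all hypothetical names and values chosen for the example.

```python
from __future__ import annotations
from dataclasses import dataclass


@dataclass
class ScoredPassage:
    text: str     # passage pulled from a paper
    source: str   # identifier of the paper it came from
    score: float  # similarity between question and passage, assumed 0..1


# Hypothetical cutoff; a real value would need tuning on test questions.
MIN_SCORE = 0.5


def answer_or_abstain(passages: list[ScoredPassage], min_score: float = MIN_SCORE) -> str:
    """Return supporting passages with their sources, or abstain if none qualify."""
    supported = [p for p in passages if p.score >= min_score]
    if not supported:
        return "I don't know: no source in the library supports an answer."
    return "\n".join(f"{p.text} [{p.source}]" for p in supported)
```

The design choice is that abstaining is the default path: an answer only appears when at least one passage clears the bar.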

How it's built

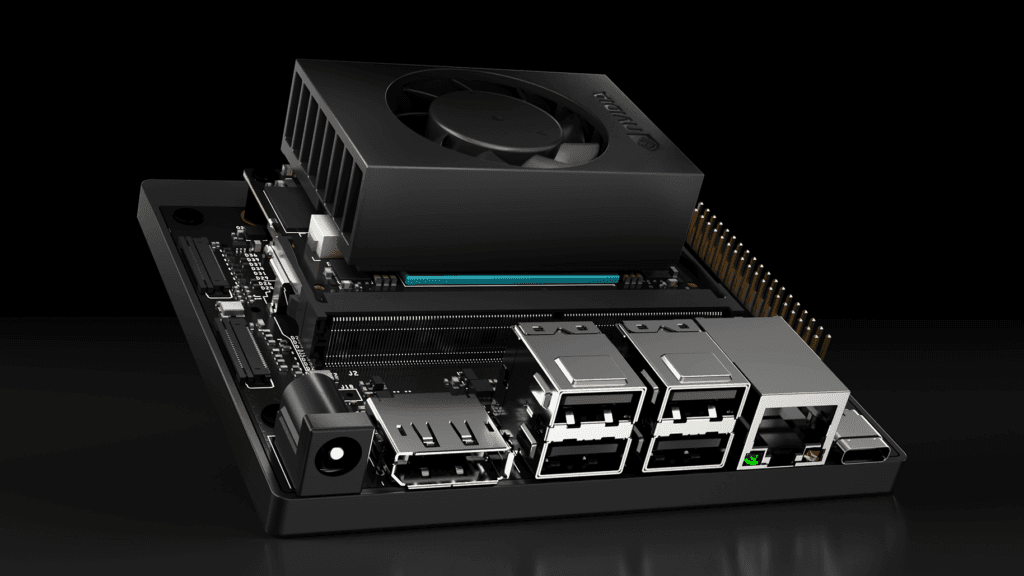

Everything runs locally on a standard laptop. No cloud, no API costs, no data leaving your machine.

Document ingestion

Peer-reviewed papers are loaded, split into chunks, and embedded with sentence transformers so they can be searched by meaning.
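As a rough sketch of the chunking step, here is one common way to split a paper into overlapping word windows. The function name `chunk_words` and the `size` and `overlap` defaults are assumptions for illustration; the embedding step with sentence transformers is left out so the example runs with the standard library alone.

```python
from __future__ import annotations


def chunk_words(text: str, size: int = 200, overlap: int = 40) -> list[str]:
    """Split text into windows of `size` words, each sharing `overlap` words with the previous one."""
    if size <= 0 or not 0 <= overlap < size:
        raise ValueError("need size > 0 and 0 <= overlap < size")
    words = text.split()
    step = size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + size]))
        if start + size >= len(words):
            break  # the last window already reached the end of the text
    return chunks
```

The overlap keeps a sentence that straddles a boundary from being cut off in both chunks, at the cost of storing some words twice.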

RAG engine

When you ask a question, the system searches the paper database and pulls the most relevant sections: not the whole paper, just what matters.
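The retrieval step amounts to ranking chunks by similarity to the question and keeping the top few. The sketch below uses a toy word-count `embed` function as a stand-in for real sentence-transformer embeddings, and a plain list instead of a vector database; `embed`, `cosine`, and `top_k` are hypothetical names for this example.

```python
from __future__ import annotations
import math
from collections import Counter


def embed(text: str) -> Counter:
    """Toy stand-in for a real embedding model: lowercase word counts."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def top_k(question: str, chunks: list[str], k: int = 3) -> list[tuple[float, str]]:
    """Return the k chunks most similar to the question, best first."""
    q = embed(question)
    scored = [(cosine(q, embed(c)), c) for c in chunks]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return scored[:k]
```

With real embeddings the ranking would reflect meaning rather than shared words, but the shape of the step is the same: score every chunk, sort, keep `k`.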

Local AI model

A Llama 3 model served through Ollama runs entirely on your machine. It generates responses strictly from the retrieved documents, never from general training data.
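One common way to keep a model on the retrieved text is to put the passages in the prompt and tell the model to use nothing else. The sketch below only builds that prompt; the wording and the function name `build_grounded_prompt` are assumptions, and the call that sends it to the local model is omitted.

```python
from __future__ import annotations


def build_grounded_prompt(question: str, passages: list[tuple[str, str]]) -> str:
    """Build a prompt from (source_id, text) pairs produced by the retrieval step."""
    context = "\n".join(f"[{sid}] {text}" for sid, text in passages)
    return (
        "Answer the question using only the numbered sources below.\n"
        "Cite the source id in brackets after every claim.\n"
        "If the sources do not contain the answer, reply exactly: I don't know.\n\n"
        f"Sources:\n{context}\n\n"
        f"Question: {question}\nAnswer:"
    )
```

A prompt like this steers the model but cannot guarantee compliance on its own, which is why a separate check on the output is still needed.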

Citation validator

Every claim in the response is cross-checked against the source paper. If it can't be verified, it doesn't make it into the answer.
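A very simple version of such a check can be sketched with word overlap: a sentence survives only if most of its words appear in at least one source passage. This is an illustrative stand-in, not the validator Terminus uses; `tokens`, `is_supported`, `keep_verified`, and the `min_overlap` threshold are hypothetical.

```python
from __future__ import annotations
import re


def tokens(text: str) -> set[str]:
    """Lowercase alphanumeric words in the text."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def is_supported(claim: str, sources: list[str], min_overlap: float = 0.8) -> bool:
    """True if at least `min_overlap` of the claim's words occur in a single source."""
    claim_tokens = tokens(claim)
    if not claim_tokens:
        return False
    return any(
        len(claim_tokens & tokens(s)) / len(claim_tokens) >= min_overlap
        for s in sources
    )


def keep_verified(answer: str, sources: list[str]) -> str:
    """Drop every sentence of the answer that no source supports."""
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", answer) if s.strip()]
    return " ".join(s for s in sentences if is_supported(s, sources))
```

Word overlap is crude: it cannot catch a sentence that reuses the source's words to say the opposite, so a stronger validator would compare meaning rather than vocabulary.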

Tech stack

Python

Llama 3 via Ollama (local LLM)

LangChain

RAG architecture

ChromaDB

FAISS

Gradio interface

Listen

Two weeks of building and mentoring

This wasn't a team project in the usual sense. I led the project and built the core system myself, with one teammate: a freshman I was mentoring through the process. My job was to build the system while teaching them how product development actually works.

Over two weeks, I went from Kris's requirements to a working prototype. The process was straightforward:

Week 1: Built the document ingestion pipeline and got the RAG engine working. This was the hardest part: making sure the system could accurately find relevant sections across hundreds of pages of research papers. I tested it constantly, checking whether the retrieved passages actually answered the question or just contained similar keywords.

Week 2: Connected the local LLM, built the citation validator, and created the interface. The citation validator was critical: it checks every claim the AI makes against the actual source. If it can't verify something, that claim gets removed from the answer.
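The Week 1 retrieval checks were done by reading the results. One way to make that kind of check repeatable, assuming a small set of hand-labelled questions exists, is a hit rate: the share of questions where at least one truly relevant chunk shows up in the top results. The function name and data shapes below are hypothetical.

```python
from __future__ import annotations


def hit_rate_at_k(
    results: dict[str, list[str]],
    relevant: dict[str, set[str]],
    k: int = 3,
) -> float:
    """Fraction of questions whose top-k retrieved chunk ids include a relevant one.

    results:  question -> retrieved chunk ids, best first
    relevant: question -> chunk ids a human marked as actually answering it
    """
    if not results:
        return 0.0
    hits = sum(
        1 for question, ids in results.items()
        if relevant.get(question, set()) & set(ids[:k])
    )
    return hits / len(results)
```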

The biggest lesson from working with my mentee: explaining your decisions to someone else forces you to make better decisions. Every time I had to explain why I was building something a certain way, I either confirmed my logic or realized I needed to rethink it.

Deliver

What I shipped

The final prototype was fully functional and demonstrated at the hackathon. I tested it with real research questions to prove it works:

Example query: "What is the environmental impact of PLA vs traditional plastics?"

Terminus searched its database, found relevant studies from real journals, and returned a detailed answer with each claim linked to its source, including author, journal name, and publication year.
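Linking each claim to its author, journal, and year suggests a small record attached to every passage. The sketch below shows one possible shape; the `Source` type and `cite` function are hypothetical, and the values in the usage line are placeholders, not a real reference.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Source:
    author: str
    journal: str
    year: int


def cite(claim: str, source: Source) -> str:
    """Attach author, journal, and year to a claim."""
    return f"{claim} ({source.author}, {source.journal}, {source.year})"


# Placeholder values only, to show the output format.
print(cite("Claim text.", Source("A. Author", "Example Journal", 2020)))
```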

Why this matters

Terminus isn't just a hackathon demo. It's a proof of concept for a bigger idea: AI tools can be trustworthy if you design them to show their work.

Right now, most people can't tell when AI is making something up. Terminus was my attempt to fix that: to build a tool where you can trust the answer because you can see the proof. It runs locally, has no API costs, and is designed never to make a claim it can't back up.

For me personally, this project brought together everything I've been working toward: identifying a real problem through client conversations, building the right solution, mentoring a teammate through the process, and shipping something that actually works.

Takeaways

What I took away from this

01

The best projects start with a real person's real problem. Terminus didn't come from a textbook assignment; it came from sitting across from Kris and listening to what he actually needed.

02

Explaining your work to someone else makes it better. Mentoring a freshman while building forced me to justify every decision. That made the final product stronger.

03

Focus beats features. Terminus does one thing: it answers research questions with verified sources. We didn't add user accounts, saved history, or a fancy UI. That focus is what made it work.

04

You don't need the cloud to build something powerful. Terminus runs entirely on a regular laptop. Sometimes the simplest deployment is the best one.